Combining powerful 3D graphics, outstanding computer vision capabilities and optimized video capture in a single-chip SoC solution is key to the success of future parking assistance solutions that include surround view systems using multiple cameras. The second SoC generation from Renesas, called R-Car, aims to provide the appropriate solution to enable ready-to-use advanced 3D surround view applications and to offer the driver an immersive and safe experience.

Key words: ADAS, 3D surround view, 3D graphics, computer vision, image recognition, structure from motion, Ethernet AVB, video compression, low latency

Author: Simon Oudin, Senior Marketing Engineer, Renesas Electronics Europe

INTRODUCTION

Surround view monitoring will become a common functionality in cars. This feature is part of the parking assistance system. From a niche market first driven by Asian car makers, it has become an option offered by the majority of car manufacturers, with the consequence of a higher requirement in terms of driver experience and solution scalability.

Renesas, a leading SoC vendor for infotainment and ADAS applications, is already a major player in supporting surround view requirements from their early phase. Today, Renesas provides a new generation of SoCs to answer global market needs with a scalable and innovative approach.

SURROUND VIEW WITH R-CAR GEN2 FAMILY

The purpose of surround view monitoring is to display a panoramic view of the car’s immediate surroundings. This 360-degree representation, shown as a 2D perspective from above, is called “bird view” or “top view”. The different views are stitched together based on the correct geometric alignment of the cameras. The brightness and colour of the individual camera videos are adjusted to harmonize the surround view [1] [2].
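As an illustration of the harmonization step, the following minimal sketch matches the brightness of one camera to a neighbouring reference camera by comparing the mean luminance in the region where both views overlap. The article does not describe the method actually used; the function names and the simple gain model are assumptions made for this example only.

```python
def mean(values):
    """Arithmetic mean of a non-empty sequence of pixel values."""
    values = list(values)
    return sum(values) / len(values)


def overlap_gain(ref_overlap, other_overlap):
    """Gain that scales `other` so its overlap mean matches the reference.

    Both arguments are luminance samples taken from the image region
    that the two adjacent cameras have in common (hypothetical input).
    """
    other_mean = mean(other_overlap)
    if other_mean == 0:
        return 1.0  # nothing to scale; leave the image unchanged
    return mean(ref_overlap) / other_mean


def apply_gain(pixels, gain, max_value=255):
    """Apply the gain to every pixel and clip to the valid range."""
    return [min(max_value, round(p * gain)) for p in pixels]


# Example: the right camera is darker than the front camera in the overlap.
gain = overlap_gain([100, 120, 140], [50, 60, 70])
harmonized = apply_gain([50, 60, 200], gain)
```

A production system would typically balance all cameras jointly and treat colour channels separately; the single pairwise gain only shows the principle.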

Nevertheless, displaying only this representation does not generally help the driver during the parking process. To facilitate this manoeuvre, additional information can be shown to the driver as 2D overlays or rear view [1]. A complementary approach is to improve the driver’s perception of distances with a 3D representation of the car’s surroundings. The target is to use 2D cameras around the car to create a comprehensive 3D representation of its immediate vicinity, with a 3D generated car as a perspective reference for the driver. It should reflect a realistic representation of the distances to nearby elements (pedestrians, cars and buildings). The 3D sphere perspective should change dynamically according to the car’s movement. The model car has to be properly integrated in the overall scene, with light or reflection on the model car [2].

This level of application drives the performance required in terms of 3D graphics and computer vision in an automotive embedded platform. Renesas created the SoC family called R-Car in order to enable this level of application. The second R-Car generation was first officially released in March 2013 and supports a wide variety of applications, such as connectivity, entertainment expansion and ADAS. This family provides outstanding performance with optimal power consumption [3] and a common API that reduces customer development effort. From this family, two devices support surround view applications: the R-Car H2 and the R-Car V2H.

3D IMMERSIVE EXPERIENCE WITH R-CAR H2

R-Car H2, released in March 2013, is the first device of this generation and is tailored to integrated cockpit solutions that include the 3D surround view application. For this use, we first need to consider the performance requirements of the 3D graphics engine. Particular attention should be paid to the two parts of the scene: the texture mapping of the 2D camera images onto a 3D sphere, and the 3D car representation.

The polygon count of the scene depends on the deformation of the 3D sphere and the rendering effects on the car model. For better rendering, the graphics engine must be able to process a significant polygon count in a short time. Moreover, as the application can use different shader programs for one scene, the graphics engine must come with a powerful shader engine. These performance requirements must be supported by a high GPU frequency, which allows fast data processing. All these performance aspects justify Renesas’ decision to integrate an outstanding 3D graphics engine in R-Car H2. Indeed, its 3D graphics engine provides performance similar to that of the 3D graphics engine in the latest iPad Air.
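To make the link between the projection surface and the polygon count concrete, the sketch below builds a simple bowl-shaped mesh (flat near the car, rising towards the edge) of the kind onto which camera images could be texture-mapped. The shape, the parameter names and the default values are illustrative assumptions and are not taken from the R-Car software.

```python
import math


def bowl_mesh(rings, segments, inner_radius=0.5, flat_radius=2.0,
              outer_radius=6.0, curvature=0.5):
    """Return (vertices, triangles) of a bowl-shaped projection surface.

    The surface is flat (z = 0) up to `flat_radius` and then rises
    quadratically. All dimensions are hypothetical values in metres.
    """
    vertices = []
    for i in range(rings + 1):
        r = inner_radius + (outer_radius - inner_radius) * i / rings
        z = curvature * max(0.0, r - flat_radius) ** 2
        for j in range(segments):
            angle = 2.0 * math.pi * j / segments
            vertices.append((r * math.cos(angle), r * math.sin(angle), z))

    triangles = []
    for i in range(rings):
        for j in range(segments):
            a = i * segments + j                   # current ring
            b = i * segments + (j + 1) % segments  # next vertex, wraps around
            c = a + segments                       # same angle, next ring
            d = b + segments
            triangles.append((a, b, c))
            triangles.append((b, d, c))
    return vertices, triangles


vertices, triangles = bowl_mesh(rings=4, segments=8)
print(len(vertices), "vertices,", len(triangles), "triangles")
```

The triangle count grows as 2 × rings × segments, so a finer or more strongly deformed surface directly increases the load on the graphics engine, in addition to the polygons of the car model itself.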

THE PATH TOWARDS AUGMENTED REALITY

Sensing the scene in 3D is the other important aspect required to provide easy-to-understand content. This can be achieved with two techniques. The first is human-like stereo vision, although it has the disadvantage of doubling the camera cost and integration effort. The other option is to compute Structure from Motion (SfM) from the movement of the car, thus providing stereo vision over time. Renesas has implemented vision-dedicated hardware accelerators in the R-Car family to run this algorithm on the four cameras in real time, meeting both performance and low power consumption requirements.

The SfM algorithm outputs a list of flow vectors representing the motion of the vehicle and of the surrounding objects. The next, non-trivial task is to find the car’s egomotion by computing the essential motion that matches the majority of the flow vectors. From the resulting fundamental matrix, the flow vectors can be sorted into those belonging to static objects and those belonging to dynamic objects in the surroundings. For static objects, the flow vectors directly provide the distance to the object, which is inversely proportional to the flow length.
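The two steps described above, sorting flow vectors with the fundamental matrix and deriving distance from flow length, can be sketched as follows. This is a simplified illustration under stated assumptions, not the Renesas implementation: the fundamental matrix is assumed to be already known, the tolerance is an arbitrary value, and the depth formula holds only for a camera translating parallel to its image plane.

```python
import math


def epipolar_residual(F, p1, p2):
    """Algebraic residual p2^T * F * p1 for pixel points p1 and p2 (u, v)."""
    x1 = (p1[0], p1[1], 1.0)
    x2 = (p2[0], p2[1], 1.0)
    Fx1 = [sum(F[r][c] * x1[c] for c in range(3)) for r in range(3)]
    return sum(x2[r] * Fx1[r] for r in range(3))


def split_static_dynamic(F, flows, tol=1.0):
    """Sort flow vectors (p1, p2) by consistency with the egomotion F.

    Vectors that satisfy the epipolar constraint within `tol` are treated
    as static. Objects moving along the epipolar line are not caught by
    this test, which is a known limitation of this simplification.
    """
    static, dynamic = [], []
    for p1, p2 in flows:
        target = static if abs(epipolar_residual(F, p1, p2)) <= tol else dynamic
        target.append((p1, p2))
    return static, dynamic


def depth_from_flow(p1, p2, focal_px, baseline_m):
    """Distance of a static point, inversely proportional to flow length."""
    flow_len = math.hypot(p2[0] - p1[0], p2[1] - p1[1])
    if flow_len == 0:
        return math.inf  # no parallax: point is effectively at infinity
    return focal_px * baseline_m / flow_len


# Fundamental matrix (up to scale) for a pure sideways translation.
F_lateral = [[0, 0, 0], [0, 0, -1], [0, 1, 0]]
flows = [((100, 50), (110, 50)),   # static, near
         ((200, 80), (205, 80)),   # static, twice as far
         ((150, 60), (150, 70))]   # violates the constraint: dynamic
static, dynamic = split_static_dynamic(F_lateral, flows)
depths = [depth_from_flow(p1, p2, focal_px=400, baseline_m=0.1)
          for p1, p2 in static]
```

In this example the focal length of 400 pixels and the 0.1 m displacement between frames are hypothetical values; halving the flow length doubles the estimated distance.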

Figure 1 (a) shows an example running on R-Car H2. The circles represent the static feature points which are the outcomes of the structure computation. The colours correspond to the clustered objects which are then fed back to the model deformation. Those can then be used to adapt the 3D model of the environment in real time as shown in Figure 1 (b). Finally, a realistic representation of the car environment is created based on this 3D model mapped with the 3D sphere generated by the graphic engine.

ETHERNET: FLEXIBLE APPROACH WITH R-CAR V2H

The R-Car family also includes the R-Car V2H, which provides a unique video path approach from the camera video acquisition over the Ethernet network down to the display interface.

This pipelined approach not only relaxes the requirements on the rest of the system (e.g. overall latency, memory bandwidth and CPU intervention), but also drastically reduces the software development complexity for the system maker.

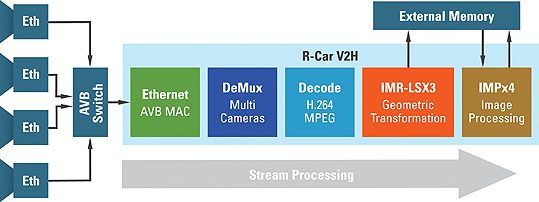

Figure 2 shows this special video path of the R-Car V2H. There is no external memory access between the demultiplexing of the four camera streams and the geometric video transformation, and each hardware accelerator is dedicated to one camera.

System cost reduction is a main factor contributing to the wider adoption of surround view. Cabling is a non-negligible portion of that cost. In the past year, two approaches have emerged to reduce the cabling cost of current LVDS-based surround view systems [4]. One uses Ethernet over an unshielded twisted pair; the alternative is an update of LVDS to cost-effective coaxial cables. Both approaches lead to a similar system cost. However, the Ethernet solution not only helps reduce system cost but also offers flexibility for future applications. For example, with the increasing adoption of drive recording systems, new features like multi-channel simultaneous video recording could be supported with very limited impact on cost, as only the SD card interface would be required. The other benefit of Ethernet over LVDS lies in the standardized approach at both the MAC level, with the AVnu Alliance, and the PHY level, with the OPEN Alliance.

OPTIMAL LATENCY VIDEO PATH

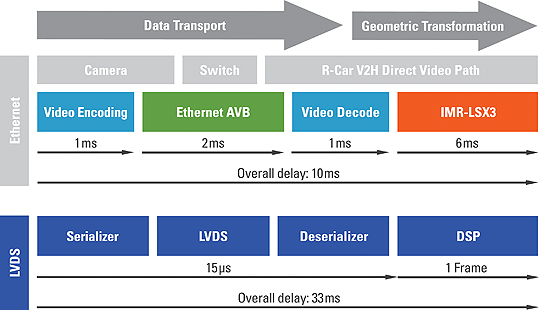

One of the main aspects requiring careful design is latency, both in the transport, including compression and decompression, and in the processing chain. Indeed, the overall latency from camera capture to display should be below 100 ms in order to give the driver a real-time perception of the scene.

Currently, cameras run at a frame rate of 30 frames/sec. When a global shutter is used, all sensor cells charge at the same time during the exposure time. Then the imager starts to output the image pixel by pixel. Consequently, the last pixel is sent around one frame (33 ms) after the capture. This is the first frame delay, which cannot be reduced. The other incompressible delay is for the display, where all pixels must be transmitted before they can be displayed, again taking around 33 ms. Finally, only about 33 ms remain to perform the rest of the tasks described in Figure 3.
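The budget arithmetic above can be written out as a short calculation. The function and parameter names are chosen for this example; the figures (100 ms target, 30 frames/sec, one frame of delay each for sensor readout and display) are those given in the text.

```python
def frame_period_ms(fps):
    """Duration of one frame in milliseconds."""
    return 1000.0 / fps


def remaining_budget_ms(total_ms, fps, fixed_frame_delays=2):
    """Latency left for transport and processing.

    `fixed_frame_delays` counts the incompressible one-frame delays:
    sensor readout and display output.
    """
    return total_ms - fixed_frame_delays * frame_period_ms(fps)


budget = remaining_budget_ms(total_ms=100.0, fps=30)
print(f"Remaining budget: {budget:.1f} ms")
```

With these inputs roughly one frame period is left for transmission, decoding and geometric transformation, which is why every buffering stage removed from the path matters.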

The first item of the chain is the data transmission. The Ethernet protocol does not provide dedicated mechanisms to ensure low latency transport and camera synchronization. This is why Renesas introduced the first Gigabit Ethernet MAC with advanced AVB hardware support in the R-Car family [5]. This specific implementation provides the necessary hardware to reduce CPU load and optimize the overall reception of compressed video. Specific mechanisms have been implemented, such as intelligent packet decapsulation and camera video filtering. Multi-view camera applications are part of the AVnu Alliance AVB Automotive profile, which includes fast start-up and low latency (maximum delay of 2 ms) considerations for camera video [6].

The first multi-camera systems with Ethernet used low latency Motion JPEG (MJPEG) compression. This technology is based on the well-known JPEG standard widely used in consumer digital cameras. Nevertheless, the impact of this technology on video quality could limit the vision processing performance [7]. Consequently, Renesas considered H.264 compression technology to be the best solution for camera video transmission [8]. It provides a better compression ratio for improved vision processing performance [7] [9]. It has also been massively adopted in consumer equipment that could be connected to the car through the Renesas infotainment connectivity solution. With the R-Car V2H, Renesas has implemented the first HD multi-channel, H.264 compliant, low latency decoder in an automotive SoC.

The final step in reducing the latency is to decrease the latency in the processing portion. Indeed, traditional DSP-based systems require a double buffering approach for the video capture. The R-Car V2H features a dedicated engine called IMR that processes the geometric image transformation on the fly. This feature supports direct streaming from up to five low latency video decoders. Thanks to the direct path in the R-Car V2H, the overall latency in an Ethernet network is reduced in comparison with a classic LVDS approach, as shown in Figure 3.

REPRESENTATION AND DETECTION

The IMR is also capable of using a look-up table (LUT) to modify the viewpoint transformation to a 2D or 3D surround view representation on the fly.

The camera viewpoint can be modified for each input frame, enabling animated transition between the user’s viewpoints. Bilinear filtering is natively supported, providing excellent image quality. Thanks to this approach, the R-Car V2H natively supports 3D surround view with very low memory requirements. The R-Car V2H offers the same image recognition hardware as the R-Car H2. Consequently, it can also enable SfM computation or even pedestrian detection.
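The principle of a LUT-driven viewpoint transformation with bilinear filtering can be sketched in software as follows. This only illustrates the general technique; the IMR performs the operation in hardware, and the LUT layout and function names used here are assumptions for the example.

```python
def bilinear_sample(image, x, y):
    """Sample a grayscale image (list of rows) at fractional (x, y)."""
    height, width = len(image), len(image[0])
    # Clamp the coordinates so that border pixels are repeated.
    x = min(max(x, 0.0), width - 1.0)
    y = min(max(y, 0.0), height - 1.0)
    x0, y0 = int(x), int(y)
    x1, y1 = min(x0 + 1, width - 1), min(y0 + 1, height - 1)
    fx, fy = x - x0, y - y0
    top = image[y0][x0] * (1 - fx) + image[y0][x1] * fx
    bottom = image[y1][x0] * (1 - fx) + image[y1][x1] * fx
    return top * (1 - fy) + bottom * fy


def remap(image, lut):
    """Build the output view: lut[row][col] = (src_x, src_y) in the input."""
    return [[bilinear_sample(image, sx, sy) for (sx, sy) in row]
            for row in lut]


camera = [[0, 10],
          [20, 30]]
# Hypothetical LUT for a 2x2 output view; swapping the LUT between
# frames changes the viewpoint without touching the pipeline itself.
lut = [[(0, 0), (1, 1)],
       [(0.5, 0), (0, 0.5)]]
view = remap(camera, lut)
```

Because each output pixel needs only its LUT entry and four neighbouring input pixels, the transformation can run as the video streams in, without buffering full intermediate frames.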

The R-Car V2H is capable of detecting pedestrians on each of the four cameras in parallel, using histogram of oriented gradients (HOG) features and support vector machine (SVM) classification. This feature has already been demonstrated on the R-Car V2H during the Renesas Developer Conference last September in Japan, and at Electronica last November in Germany. Figure 4 shows this proof of concept [10].
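The two building blocks of this detection approach can be illustrated with a strongly reduced sketch: a gradient-orientation histogram for one image cell, and a linear SVM decision on the resulting feature vector. A complete detector additionally uses block normalization, many cells per window and a sliding window over the image. The bin count and the SVM weights below are placeholder assumptions; real weights come from offline training.

```python
import math


def cell_histogram(cell, bins=9):
    """Unsigned gradient-orientation histogram of one grayscale cell.

    Gradients are computed with central differences on interior pixels
    and each pixel votes with its gradient magnitude.
    """
    height, width = len(cell), len(cell[0])
    hist = [0.0] * bins
    bin_width = 180.0 / bins
    for y in range(1, height - 1):
        for x in range(1, width - 1):
            gx = cell[y][x + 1] - cell[y][x - 1]
            gy = cell[y + 1][x] - cell[y - 1][x]
            magnitude = math.hypot(gx, gy)
            angle = math.degrees(math.atan2(gy, gx)) % 180.0
            hist[int(angle // bin_width) % bins] += magnitude
    return hist


def l2_normalize(vec, eps=1e-6):
    """Scale the feature vector to (approximately) unit length."""
    norm = math.sqrt(sum(v * v for v in vec) + eps)
    return [v / norm for v in vec]


def svm_score(features, weights, bias):
    """Linear SVM decision value; positive means 'pedestrian'."""
    return sum(f * w for f, w in zip(features, weights)) + bias


# A cell containing a vertical edge: all gradients point horizontally.
cell = [[0, 0, 10, 10]] * 4
features = l2_normalize(cell_histogram(cell))
weights = [1.0] + [0.0] * 8   # placeholder weights, not trained values
is_pedestrian = svm_score(features, weights, bias=-0.5) > 0
```

The per-cell histogram and the dot product are regular, data-parallel operations, which is what makes this classifier a good fit for dedicated image recognition hardware running on several camera streams at once.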

CONCLUSION

In this article, we have presented the trend of automotive multi-camera applications focusing on the 3D surround view for parking assistance systems.

We have also introduced the scalable R-Car automotive SoC family. R-Car H2 is capable of creating a comprehensive 3D representation of a car’s immediate surroundings to facilitate parking manoeuvres. In the R-Car V2H, a unique direct Ethernet video path has been introduced, with an Ethernet AVB MAC and a multi-channel H.264 low latency decoder for ultra-low latency video processing and memory bandwidth reduction.

Considering that this application would be part of an autonomous parking assistance system, Renesas has already introduced key features to target ASIL B at system level.

References

[1] Mengmeng Yu and Guanglin Ma, Delphi Automotive “360° Surround View System with Parking Guidance”, May 2014

[2] M. Friebe, J. Petzold, “Visualisation Functions in Advanced Camera-Based Surround View Systems”, 2014

[3] Peter Fiedle, “Mehr Power weniger Leistungsaufnahme”, February 2014

[4] N. Noebauer, “Is Ethernet the rising star for in-vehicle networks?”, September 2011

[5] S. Oudin, N. Kitajima, “Das zukünftige Ethernet-AVB Netzwerk”, November 2012

[6] AVnu Alliance White Paper “AVB for Automotive Use” http://www.avnu.org/knowledge_center, October 2014

[7] J. Forster, X. Jiang and A. Terzis, “The Effect of Image Compression on Automotive Optical Flow Algorithms”, 2011

[8] T. Wiegand, G. J. Sullivan, G. Bjøntegaard, and A. Luthra, “Overview of the H.264/AVC Video Coding Standard”, July 2003

[9] T. Nguyen, D. Marpe, “Performance analysis of HEVC-based intra coding for still image compression”, May 2012

[10] http://am.renesas.com/edge_ol/topics/21/index.jsp

www.renesas.com

Simon Oudin is Senior Marketing Engineer for surround view applications in the newly created “Global ADAS Solution Group” at Renesas Electronics Europe.